A Bad App

A long time ago I wrote an app called "Storytellers". With AI help, that is. The idea at first was to take turns with the AI, like in a game, each writing the next sentence. One from me, one from the AI. And then to see where that leads.

I quickly realized that this was fun, and that it might be worth turning into an app for writing stories with the help of AIs.

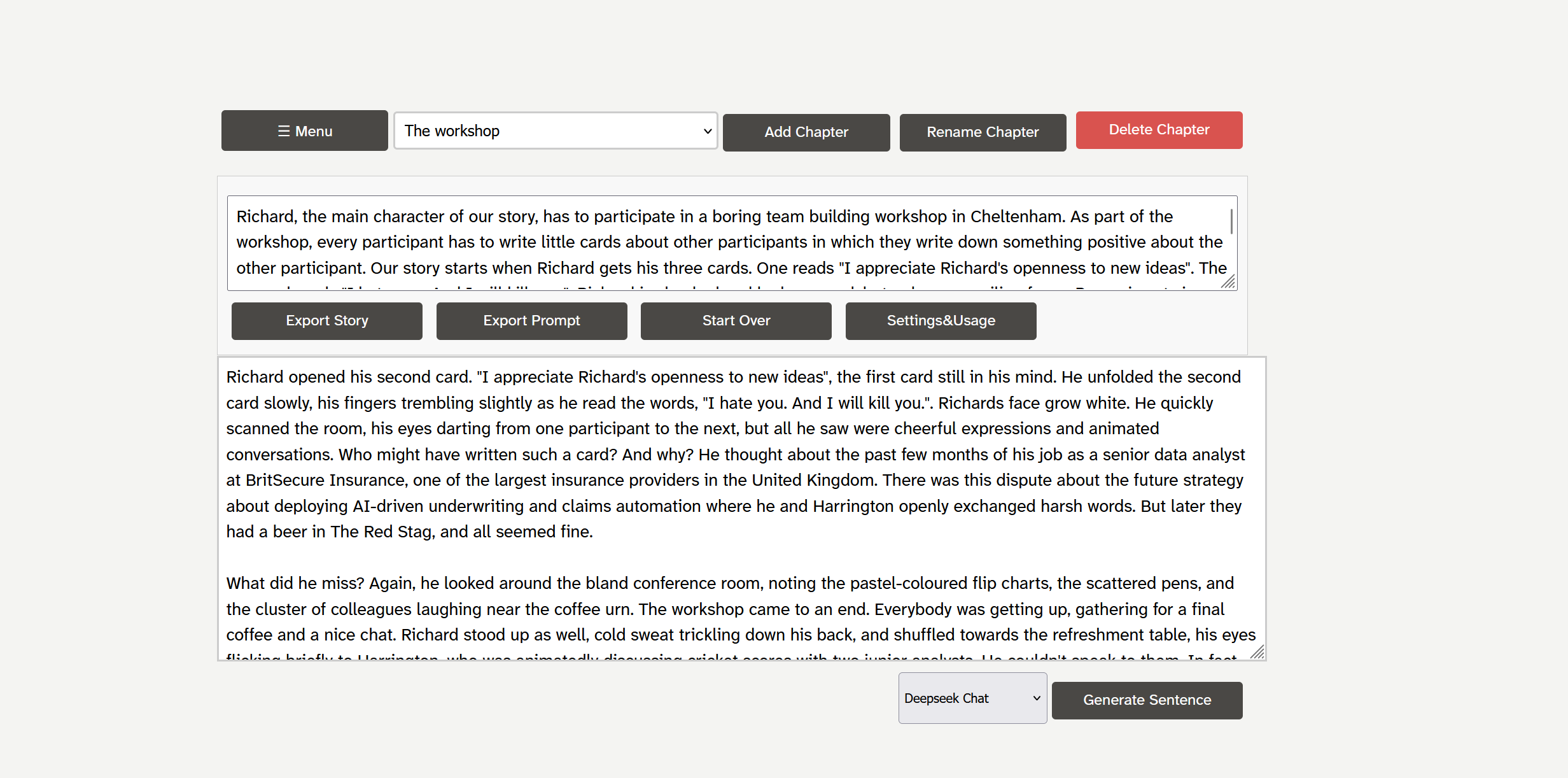

Not “Let the AI write,” not handing authorship over to the AI. That doesn’t work, and it’s no fun either. But if you’re not a highly structured writer, if you let yourself drift, then every now and then you reach a point where you ask yourself how the story should continue. Maybe a tip from the AI helps.

And yet the first version of Storytellers was an ugly, overloaded thing. Feature after feature got added. The actual text took up maybe 20% of the screen. There were options everywhere: controls to influence AI generation, a choice of different LLMs, import, export, image generation.

I also started a few nice stories.

Then for a long time I did nothing and left the app lying around unused.

Three days ago I felt like continuing a story. I started the app and had no desire at all to read my way back into the story there. So I exported it as text and read it in Apple’s Pages. And that was much nicer.

The Restart

I still think the core idea of the app is great: using the AI to write exactly one more sentence, with knowledge of where the story is supposed to go, and to improve or rephrase individual passages. So I had to fundamentally redesign the app so that it feels more like Pages and less like my old app, with the text at the center and nothing else. It has to be really fun to write with it.

There are apps that take this approach, such as "Bear" on iOS, just not with an AI as a co-author.

The Discussion

The whole app emerged from a long and unexpectedly exciting discussion with ChatGPT 5.2. Once the AI understood what I wanted to achieve, we broke every feature down in detail and discussed it, always with one thing in mind: the features should not push themselves forward or distract, but live with the text in a very reduced and natural way. We argued about buttons, spacing, gestures, and the courage to leave things out. The AI was, yes, I dare say it, very creative in its solutions and in the food for thought it offered.

In the end, after a good hour of discussion, I had a clear idea of what I wanted. The natural next step was to ask the AI to export a Markdown document with a specification that tells an “implementing LLM” exactly what to build and how to build it.

The Implementation 1

Took the Markdown, put it into a new directory, and asked Cursor + Opus 4.6 to build it.

And it failed across the board: the AI burned 10 dollars’ worth of tokens, but the app simply didn’t work. No amount of fixing made the central element, a plain “gem” in the shape of a diamond at the bottom edge of the screen, show up.

At some point it dawned on me: I had answered yes to ChatGPT’s question of whether this should be an “on-device, without backend, offline-capable” app. And so Opus had headed into the nightmare of developing progressive web apps, where the unbearable

This kind of dawning, by the way, is something LLMs still can’t do. In that respect they’re simply too machine-like, unable to take a step back and draw on past experience.

So: mission aborted. I asked ChatGPT to rewrite the specification for an app with a Node.js backend and authentication. After lengthy follow-up discussions about how a login screen is actually horrible UX, and how to avoid one while staying secure, we arrived at an approach in which I, as app admin, can generate an invitation link on request that initializes the session for the user.

The user only wants to use the app, gets a link, clicks it, and is in the text. Very nice.
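For the curious, here is a minimal sketch of how such an invitation link could work on a Node.js backend. It is not the code the app actually uses. Everything in it is an assumption made for illustration: the names `createInviteLink` and `redeemInvite`, the in-memory maps, the `/invite/` URL path, and the choice to make invitations single-use with an expiry time.

```typescript
import { randomBytes } from "crypto";

// Hypothetical data model: one record per invitation the admin hands out.
interface Invite {
  userName: string;
  expiresAt: number; // milliseconds since epoch
  used: boolean;
}

// In-memory stores for illustration only; a real backend would persist these.
const invites = new Map<string, Invite>();
const sessions = new Map<string, string>(); // sessionId -> userName

// Admin side: create an unguessable token and wrap it in a link.
function createInviteLink(
  baseUrl: string,
  userName: string,
  ttlMs: number,
  now: number = Date.now()
): string {
  const token = randomBytes(32).toString("hex"); // 64 hex characters
  invites.set(token, { userName, expiresAt: now + ttlMs, used: false });
  return `${baseUrl}/invite/${token}`;
}

// User side: clicking the link redeems the token and starts a session.
// Returns the new session id, or null if the token is unknown, used, or expired.
function redeemInvite(token: string, now: number = Date.now()): string | null {
  const invite = invites.get(token);
  if (!invite || invite.used || now > invite.expiresAt) {
    return null;
  }
  invite.used = true; // assumption: each link works exactly once
  const sessionId = randomBytes(32).toString("hex");
  sessions.set(sessionId, invite.userName);
  return sessionId;
}
```

In a real server the session id would typically travel back to the browser in a cookie, so that every later request lands straight in the text without a login screen.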

The Implementation 2

Then I generated the Markdown file again and started over in a new directory, this time with GPT 5.3 codex, because Claude somehow wasn’t responding.

The app worked right away and was actually a nice first draft.

Getting from the first idea to the first working version took about two hours, maybe three. Then a few more hours, maybe two, for further improvements, and I had the simple, elegant writing app with AI, exactly as I had hoped.

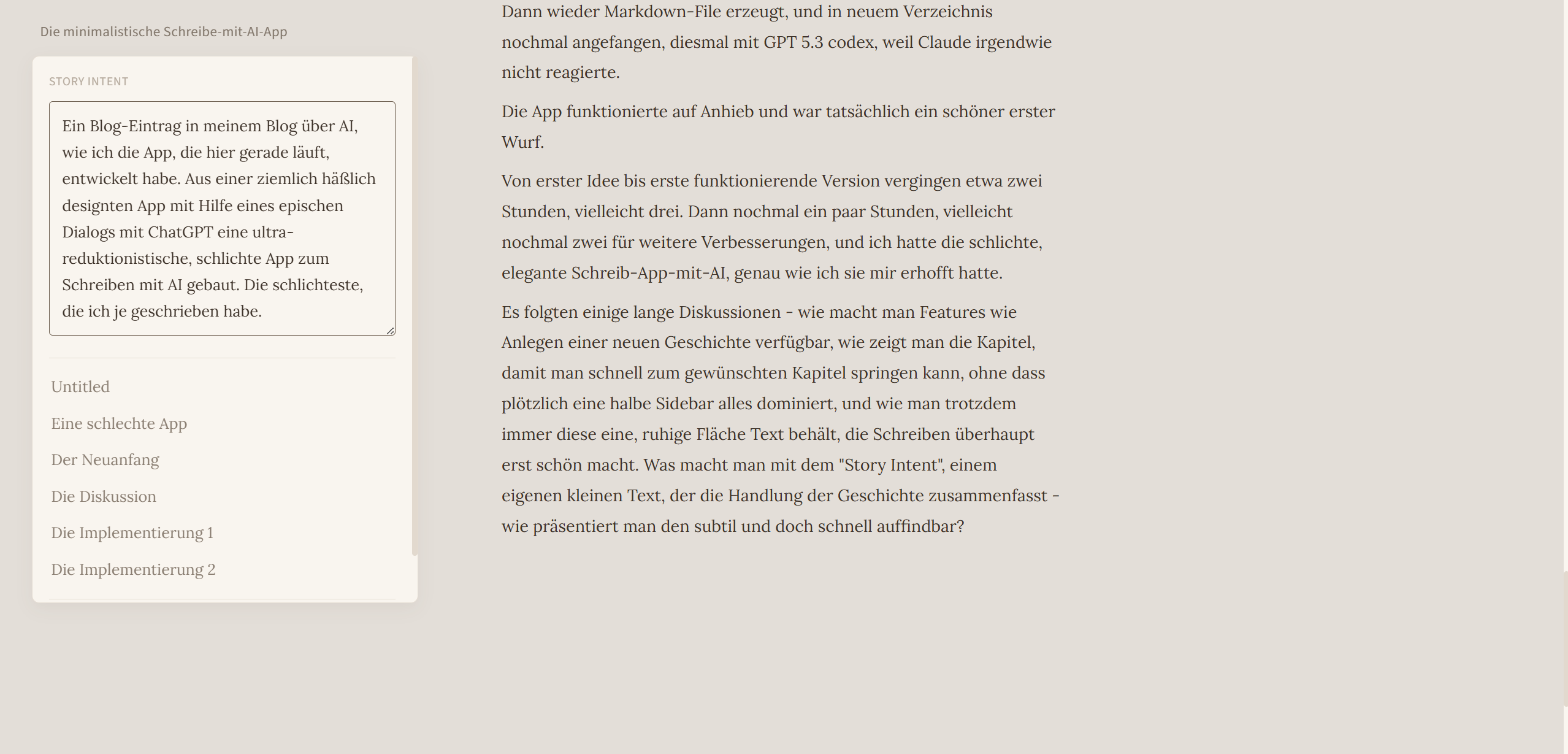

Some long discussions followed. How do you make features like creating a new story available? How do you show the chapters so you can quickly jump to the one you want without half a sidebar suddenly dominating everything? And how do you still always keep that one calm surface of text that makes writing beautiful in the first place? What do you do with the “story intent,” a little text of its own that summarizes the plot of the story: how do you present it subtly, yet keep it quick to find?

And Now?

The app is there, and I like using it. Does it need to be able to do more? It could do a lot more. A sidebar with AI summaries of the characters and the places. An auto-update of the story intent from the story. But all of that would bloat the app, and the simplicity is what’s fun (I’m actually writing this blog post with the app).

What Did I Learn?

I learned that reduction doesn’t come from minimalist romanticism, but from persistent dialogues in which the model lays out alternatives in front of me and I consistently cut. And I learned that my belief that I am clearly the author of my little apps may no longer be so tenable. I made all the essential decisions. But ChatGPT guided me, gave me 3–5 options. Did it maybe even steer me in a direction?

And what does that mean with regard to copyright? If a model not only delivers code building blocks, but also helps determine the shape of the interface, the tone of the interaction, and the trade-offs between options, whose signature remains in the end?

The questions won’t get any fewer with AIs in 2026.

P.S. I fed two chapters of a story I'm writing with LLMs into two different "AI slop detectors". Both tell me it's 100% human-written. I guess that's the point. The app encourages you to write; AI is there if you need assistance, but the result stays your text.